Why Every Financial Institution Should Consider Explainable AI

March 2021

Financial services companies are increasingly using artificial intelligence (AI) to design solutions that support their business, including assigning credit scores, predicting liquidity balances and optimizing investment portfolios. AI enhances the speed, precision and effectiveness of human efforts related to these processes and can automate manually intense data management tasks.

However, as AI advances, new concerns emerge.

The real challenge is transparency: when people don’t understand the reasoning behind AI models, or when only a few people do, the models may inadvertently bake in bias or fail outright. A good example was the introduction of the iPhone X, which used facial recognition AI to unlock the phone.

Unfortunately, the iPhone X AI wasn’t trained on faces from a wide enough spectrum of demographics. In China, this resulted in faulty lock functionality in which individuals’ phones could be unlocked by their children or coworkers, leading to complaints, refunds and reputational harm.1

Algorithmic bias stemming from a lack of transparency is not isolated to one company or country; it is a global problem. For example, a study from the National Institute of Standards and Technology (NIST) found that facial recognition AI developed in China, Japan, and South Korea recognized East Asian faces far more readily than Caucasian faces. The reverse was true for algorithms developed in France, Germany, and the United States, which were significantly better at recognizing Caucasian facial characteristics. This suggests that the conditions in which AI is created, particularly the racial makeup of its development team and test data, can influence the accuracy of its results.2

In general terms, the concept of fiduciary duty requires institutions to act without bias; an institution that acts in a biased manner may be in breach of its duties.3

Regulatory Impacts on AI

For financial companies, this can also be a regulatory issue. Pan-national AI equality initiatives have been created by the G20+, and the European Union has drafted AI standards focused on human rights.4 In Asia, the Monetary Authority of Singapore (MAS) has announced the successful conclusion of the first phase of Veritas,5 an initiative to create a framework to promote and strengthen the responsible adoption of AI and Data Analytics (AIDA). BNY Mellon is one of the 25 consortium members for this collaborative work.6

Explainable AI Could Be the Answer

For AI solutions to reach their full potential, the organizations that use them must increase transparency to help stakeholders, whether clients, employees or investors, understand how AI is making decisions. One possibility is Explainable AI, a dedicated research field with growing momentum that aims to describe the rationale behind AI decision-making.

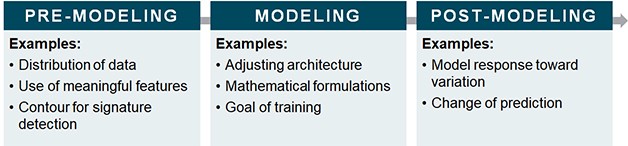

Explainable AI, sometimes called XAI, can be divided into three stages according to the development cycle (see Figure 1). The pre-modeling stage focuses on understanding input data; the modeling stage evaluates the models’ architecture and formulas; and the post-modeling stage provides a clearer picture of the expected output from each stage of AI training.

Figure 1

Source: BNY Mellon

The more robust the AI technology, the more challenging it is to explain its reasoning. The more complex the neural networks designed to execute AI, the less transparent they become. This creates a trade-off between explainability and completeness, as shown in a study by the Defense Advanced Research Projects Agency (DARPA).7

In addition, different people require different levels of explanation. Take a photocopy machine as an example: A user may just want to know how to use the machine to make copies, while an engineer may want to know all the details, including the design of the machine. As Explainable AI systems are created, information officers must decide what level of stakeholder understanding is necessary. Will financial institutions need to make their AI platforms explainable to their legal team, their compliance officers, or diversity and inclusion executives?

While standards are still being built, trends indicate that explainable AI in some form will be a necessity. In fact, according to Gartner,8 by 2025, 30 percent of government and large enterprise contracts for the purchase of AI products and services will require the use of explainable and ethical AI. The current market for this technology is still small in relative terms: in a Crunchbase search, companies classified under “explainable AI” accounted for about 0.2% of all U.S. emerging technology companies in the database (26 of 13,294).9 As the need for these solutions grows, investment in explainable AI, and the technology that results from it, is likely to follow.

Emerging Leaders in Explainable AI

Players from the old guard and within the startup community are leading the charge in this area, with tech behemoths creating initiatives for promoting and enforcing explainable AI. IBM has OpenScale; Google has Explainable AI; and Microsoft has InterpretML, an explainable AI service through Azure that interprets the behavior of machine learning models, in addition to the Fairlearn Toolkit, a popular explainable AI toolkit that provides an interactive visualization dashboard and unfairness mitigation algorithms. These tools are designed to help achieve reasonable trade-offs between fairness and model performance.
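To make the trade-off between fairness and model performance concrete, here is a minimal, hand-rolled sketch. It does not use the Fairlearn API or any vendor product; the scores, labels, group names and thresholds are made-up assumptions chosen only for illustration. It compares a single approval threshold against group-specific thresholds and reports accuracy alongside the gap in approval rates between groups (often called the demographic parity difference).

```python
# Toy data (hypothetical): model scores, true outcomes, and a group label per applicant.
scores = [0.9, 0.8, 0.7, 0.4, 0.6, 0.5, 0.3, 0.2]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]


def accuracy(preds, truth):
    """Fraction of predictions that match the true outcomes."""
    return sum(p == y for p, y in zip(preds, truth)) / len(truth)


def demographic_parity_difference(preds, group_labels):
    """Largest gap in approval rate between any two groups."""
    rates = []
    for g in sorted(set(group_labels)):
        member_preds = [p for p, gg in zip(preds, group_labels) if gg == g]
        rates.append(sum(member_preds) / len(member_preds))
    return max(rates) - min(rates)


def evaluate(threshold_by_group):
    """Approve when the score meets the applicant's group threshold."""
    preds = [int(s >= threshold_by_group[g]) for s, g in zip(scores, groups)]
    return accuracy(preds, labels), demographic_parity_difference(preds, groups)


# One threshold for everyone versus a lower threshold for group B.
single = evaluate({"A": 0.55, "B": 0.55})
adjusted = evaluate({"A": 0.55, "B": 0.45})

print("single threshold   (accuracy, parity gap):", single)
print("adjusted threshold (accuracy, parity gap):", adjusted)
```

On this toy data, lowering the threshold for group B narrows the approval-rate gap but costs some accuracy, which is the kind of trade-off such toolkits aim to help practitioners examine.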

There are also several startups in the space, including many designed for the financial services sector. For example, Fiddler Labs recently raised $14M and received a strategic investment from Amazon.10 It incorporates explainable techniques to help highlight discrepancies in machine learning models and ensure compliance with industry regulations. Kyndi, which raised $28.5M, offers auditable AI solutions to government, life science, and financial service verticals.11 Arthur AI raised $15M for technology that checks whether organizations’ models are exhibiting bias or underperforming12; Superwise.AI raised $4.5M for its AI Assurance platform13; Mona, which raised $3M, helps organizations gain transparency into their models14; and Imandra, a cloud-native company that provides an automated reasoning system designed to bring governance to critical algorithms for the financial sector, raised $7.6M from investors such as Live Oak Venture Partners, Albion VC, IQ Capital, and Anthemis.15

We spoke with Sophie Winwood from Anthemis, a U.K.-based growth venture capital firm and member of the BNY Mellon Accelerator Program Venture Capital Advisory Board, about her recent focus on Explainable AI.

“AI remains one of the hottest areas to invest in, and has the potential to have a huge impact on the global economy (estimated by PwC to be $15.7trn by 2030).16 However, for AI to live up to the hype, companies, consumers and regulators all need to be able to understand what it’s doing, so they can trust the output.”

She continued, “We’ve all heard the horror stories around when AI goes wrong, such as Microsoft’s AI Twitter chatbot Tay, but the repercussions for regulated industries like financial services and healthcare are much more serious. While this might not seem like a ‘right now’ problem for many in these industries, who are still grappling with how and where to implement AI effectively, the time to start thinking about the controls and oversight around AI is yesterday. I’m personally excited to see what new innovations come out of this nascent but exciting area of tech.”

How It Works: Two Explainable AI Models

In data science, different models offer different levels of explainability and accuracy. As data volumes and computational resources grow, the field is moving toward more complex, higher-accuracy models. However, to best showcase explainable AI, we will discuss two of the simpler approaches: decision trees and Local Interpretable Model-agnostic Explanations (LIME).

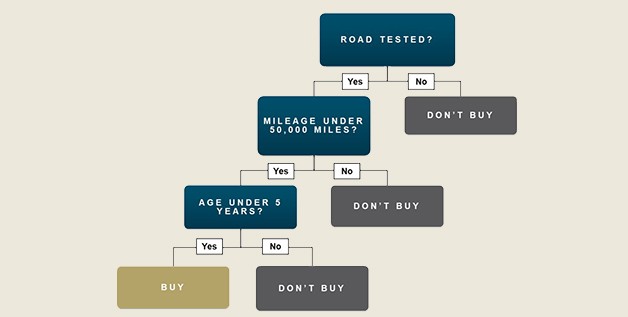

At the simplest level, decision trees are machine learning models widely used for classification problems, as they provide an effective structure for laying out options and investigating the possible outcomes. Classification models help to choose among several pre-defined paths, and a decision tree’s decision-making process is self-explanatory and easily understood by even the most non-technical user.

For example, see a basic decision tree in Figure 2, built for documenting the reasoning for purchasing a car based on reliability.

Figure 2

Source: BNY Mellon
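As a rough illustration of why a decision tree is self-explanatory, the sketch below hand-codes a tiny car-purchase tree and records every question it asks on the way to a decision. The questions, thresholds and field names (`reliability_rating`, `price`, `budget`) are hypothetical and are not taken from the figure; a production model would normally learn its splits from data.

```python
# Hypothetical tree: the questions and thresholds are illustrative assumptions.
# Each internal node holds a readable question, a test, and "yes"/"no" branches;
# each leaf is simply the final decision as a string.
TREE = {
    "question": "reliability_rating >= 4",
    "test": lambda car: car["reliability_rating"] >= 4,
    "yes": {
        "question": "price <= budget",
        "test": lambda car: car["price"] <= car["budget"],
        "yes": "buy",
        "no": "do not buy",
    },
    "no": "do not buy",
}


def decide(tree, car):
    """Walk the tree and return (decision, path).

    `path` lists each question asked and the answer given, which is the
    explanation for the decision.
    """
    path = []
    node = tree
    while isinstance(node, dict):
        answer = "yes" if node["test"](car) else "no"
        path.append(f"{node['question']} -> {answer}")
        node = node[answer]
    return node, path


decision, path = decide(
    TREE, {"reliability_rating": 5, "price": 18000, "budget": 20000}
)
print(decision)
for step in path:
    print("  because", step)
```

The returned path doubles as an audit trail: every decision can be traced back to the specific conditions that produced it.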

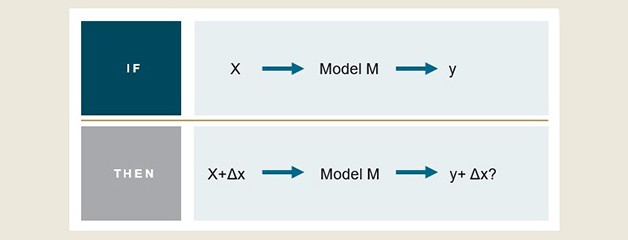

For more complex models, we can use other techniques, such as LIME, which explains how the input features of a machine learning model affect its predictions. Its core idea, varying the inputs and observing how the output changes, is common practice in engineering (see Figure 3). However, the method relies on a strong assumption that the model behaves approximately linearly in the neighborhood of the sample being explained, which may not hold true in all cases.

Figure 3

Source: BNY Mellon
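The idea of varying the inputs and watching the output can be sketched in a few lines. The following is a simplified, LIME-style local surrogate written from scratch; it is not the API of the `lime` package, and it omits steps the full method includes, such as feature selection. The `black_box` function, its two features (`income`, `debt`) and all parameter values are hypothetical stand-ins for an opaque model. The sketch perturbs one instance, weights each perturbed sample by its proximity to that instance, and fits a weighted linear model whose coefficients serve as the local explanation.

```python
import math
import random


def black_box(x):
    """Hypothetical opaque model: a nonlinear score between 0 and 1."""
    income, debt = x
    return 1.0 / (1.0 + math.exp(-(0.8 * income - 1.5 * debt * debt)))


def solve(a, b):
    """Solve the linear system a * w = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        # Partial pivoting for numerical stability.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def explain_locally(model, instance, n_samples=500, scale=0.3,
                    kernel_width=0.5, seed=0):
    """Fit a proximity-weighted linear surrogate around `instance`.

    Returns (intercept, weights); weights[i] estimates how the model output
    changes per unit change in feature i near the instance.
    """
    rng = random.Random(seed)
    d = len(instance)
    # Accumulate the weighted normal equations (X^T W X) w = X^T W y.
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        sample = [v + rng.gauss(0.0, scale) for v in instance]
        dist_sq = sum((s - v) ** 2 for s, v in zip(sample, instance))
        weight = math.exp(-dist_sq / kernel_width ** 2)  # closer counts more
        row = [1.0] + [s - v for s, v in zip(sample, instance)]
        y = model(sample)
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    coeffs = solve(xtwx, xtwy)
    return coeffs[0], coeffs[1:]


intercept, weights = explain_locally(black_box, [1.0, 1.0])
print("local effect of income:", round(weights[0], 3))
print("local effect of debt:  ", round(weights[1], 3))
```

For this toy model the surrogate reports a positive local effect for income and a larger negative one for debt. Because the fit is only valid near the chosen instance, a different applicant could receive a different explanation.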

LIME can be applied to various models and helps to explain one of the most intricate kinds of model, neural networks. These are just two approaches, but as AI as a sector expands, so does the technology of Explainable AI.

Conclusion

As the use of AI grows, the issue of transparency will be magnified and explainable AI will become paramount. With AI increasingly used across the financial services value chain, from deciding who gets a loan to running large portfolios, being able to explain the decisions AI makes will be critical. Financial institutions will need to provide evidence for these decisions to regulators, as well as to their clients, especially in cases where the AI is wrong. Integrating explainable AI into institutions’ processes now will therefore pave the way for the future. Moreover, understanding these decisions will enable companies to improve their AI models and reduce biases, leading to greater efficiencies and better outcomes for companies within and beyond the financial sector.

This was underscored when we talked with Oren Levy, of Comprendo AI, about why he recently founded an explainable AI company. He said, “I founded Comprendo AI after extensive research about the ‘black box’ challenge of advanced AI models and neural networks. I realized that there is no future for AI without explainability.”

Are you an explainable AI company that we should know about? Apply to our Accelerator.

Sign up for the Enterprise Innovation Quarterly Newsletter to learn more about emerging technologies in financial services.

1 Neal, Brandi. “A Woman In China Claims That Her IPhone X Was Unlocked By A Coworker's Face, & It's Raising Questions About Diversity In Tech.” Bustle, 16 Dec. 2017, www.bustle.com/p/a-woman-in-china-claims-that-her-iphone-x-was-unlocked-by-a-coworkers-face-its-raising-questions-about-diversity-in-tech-7617858

2 Clare Garvie, Jonathan Frankle. “Facial-Recognition Software Might Have a Racial Bias Problem.” The Atlantic, Atlantic Media Company, 7 Apr. 2016, www.theatlantic.com/technology/archive/2016/04/the-underlying-bias-of-facial-recognition-systems/476991/

3 Laby, Arthur R. "Resolving Conflicts of Duty in Fiduciary Relationships." American University Law Review 54, no.1 (2004): 75-149, https://digitalcommons.wcl.american.edu/cgi/viewcontent.cgi?article=1694&context=aulr

4 European Commission. “Ethics Guidelines for Trustworthy AI.” Shaping Europe's Digital Future - European Commission, 17 Nov. 2020, ec.europa.eu/digital-single-market/en/news/ethics-guidelines-trustworthy-ai

5 “Veritas Initiative Addresses Implementation Challenges in the Responsible Use of Artificial Intelligence and Data Analytics.” Monetary Authority of Singapore, 6 Jan. 2021, www.mas.gov.sg/news/media-releases/2021/veritas-initiative-addresses-implementation-challenges

6 “MAS Partners Financial Industry to Create Framework for Responsible Use of AI.” Monetary Authority of Singapore, 13 Nov. 2019, www.mas.gov.sg/news/media-releases/2019/mas-partners-financial-industry-to-create-framework-for-responsible-use-of-ai

7 Turek, Dr. Matt. “Explainable Artificial Intelligence (XAI).” Defense Advanced Research Projects Agency (DARPA), 2018, www.darpa.mil/program/explainable-artificial-intelligence

8 Haranas, Mark. “Gartner's Top 10 Technology Trends For 2020 That Will Shape The Future.” CRN, 28 Oct. 2019, www.crn.com/slide-shows/virtualization/gartner-s-top-10-technology-trends-for-2020-that-will-shape-the-future/8

9 A search for “explainable AI” in the crunchbase.com search bar, performed on 1/21/21, returned only 26 companies classified under that term out of 13,294 total active companies.

10 “Fiddler Secures Strategic Investment from Amazon Alexa Fund to Accelerate AI Explainability.” Fiddler AI, 25 Aug. 2020, www.fiddler.ai/press-releases/fiddler-secures-strategic-investment-from-amazon-alexa-fund-to-accelerate-ai-explainability

11 “Kyndi - Crunchbase Company Profile & Funding.” Crunchbase, www.crunchbase.com/organization/kyndi

12 “We've Just Raised Our Series A, and the Journey Is Just Beginning.” Arthur AI, 9 Dec. 2020, www.arthur.ai/announcing-series-a

13 “Superwise.ai Secures $4.5M in Seed Funding to Accelerate the Adoption of Its AI Assurance Platform.” Business Wire, 10 Mar. 2020, www.businesswire.com/news/home/20200310005701/en/

14 “Mona - Crunchbase Company Profile & Funding.” Crunchbase, www.crunchbase.com/organization/mona-labs

15 “Imandra - Crunchbase Company Profile & Funding.” Crunchbase, www.crunchbase.com/organization/imandra

16 PricewaterhouseCoopers. “PwC's Global Artificial Intelligence Study: Sizing the Prize.” PwC, 2017, www.pwc.com/gx/en/issues/data-and-analytics/publications/artificial-intelligence-study.html

BNY Mellon is the corporate brand of The Bank of New York Mellon Corporation and may be used to reference the corporation as a whole and/or its various subsidiaries generally. This material does not constitute a recommendation by BNY Mellon of any kind. The information herein is not intended to provide tax, legal, investment, accounting, financial or other professional advice on any matter, and should not be used or relied upon as such. The views expressed within this material are those of the contributors and not necessarily those of BNY Mellon. BNY Mellon has not independently verified the information contained in this material and makes no representation as to the accuracy, completeness, timeliness, merchantability or fitness for a specific purpose of the information provided in this material. BNY Mellon assumes no direct or consequential liability for any errors in or reliance upon this material.

This material may not be reproduced or disseminated in any form without the express prior written permission of BNY Mellon. BNY Mellon will not be responsible for updating any information contained within this material and opinions and information contained herein are subject to change without notice. Trademarks, service marks, logos and other intellectual property marks belong to their respective owners.

© 2021 The Bank of New York Mellon Corporation. All rights reserved.